The Advanced Tri-lab Software Environment (ATSE) is an integrated software environment that provides a software ecosystem for leading-edge prototype high-performance computing (HPC) systems. By leveraging vendor software alongside open-source software throughout the stack, ATSE continually pushes forward the computing environment experience for the Advanced Simulation and Computing (ASC) program on next-generation systems.

Prototype systems often have gaps in the software available from vendors or from open-source development. The ATSE team works to provide a productive user environment in the early days of system availability by porting software and reference implementations, making upstream contributions, and working with vendors and open-source developers to provide deeply characterized bug reports. As the ecosystem around leading-edge systems continues to develop, ATSE integrates components from different sources and vendors to provide a complete experience to the ASC user. When possible, multiple sources for a software capability are provided to allow for experimentation and operational flexibility.

By concentrating on the software ecosystem challenges and opportunities presented by advanced architecture systems, prototype systems, and testbeds, ATSE aims to drive advancements in the breadth and effectiveness of computing systems available to ASC. This initiative not only enhances the overall performance and capabilities of HPC systems but also empowers vendors to add value within a cohesive and collaborative framework, ultimately fostering excellence in computational science and engineering.

Albany is an implicit, unstructured grid, finite element code for the solution and analysis of partial differential equations. Albany is the main demonstration application of the AgileComponents software development strategy at Sandia. It is a PDE code that strives to be built almost entirely from functionality contained within reusable libraries (such as Trilinos/STK/Dakota/PUMI). Albany plays a large role in demonstrating and maturing functionality of new libraries, and also in the interfaces and interoperability between these libraries. It also serves to expose gaps in our coverage of capabilities and interface design.

In addition to the component-based code design strategy, Albany also attempts to showcase the concept of Analysis Beyond Simulation, where an application code is developed up front for a design and analysis mission. All Albany applications are born with the ability to perform sensitivity analysis, stability analysis, optimization, and uncertainty quantification, with clean interfaces for exposing design parameters for manipulation by analysis algorithms.

Albany also attempts to be a model for software engineering tools and processes, so that new research codes can adopt the Albany infrastructure as a good starting point. This effort involves a close collaboration with the 1400 SEMS team.

The Albany code base is host to several application projects, notably:

- LCM (Laboratory for Computational Mechanics) [PI J. Ostien]: A platform for research in finite deformation mechanics, including algorithms for failure and fracture modeling, discretizations, implicit solution algorithms, advanced material models, coupling to particle-based methods, and efficient implementations on new architectures.

- QCAD (Quantum Computer Aided Design) [PI Nielsen]: A code to aid in the design of quantum dots built in doped silicon devices. QCAD solves the coupled Schrödinger-Poisson system. When wrapped in Dakota, optimal operating conditions can be found.

- FELIX (Finite Element for Land Ice eXperiments) [PI Salinger]: This application solves variants of a nonlinear Stokes flow for simulating the evolution of ice sheets. In particular, it conducts climate modeling of the Greenland and Antarctic ice sheets for sea-level rise. It will be linked into ACME.

- Aeras [PI Spotz]: A component-based approach to atmospheric modeling, where advanced analysis algorithms and design for efficient code on new architectures are built into the code.

In addition, Albany is used as a platform for algorithmic research:

- FASTMath SciDAC project: We are developing a capability for adaptive mesh refinement within an unstructured grid application, in collaboration with Mark Shephard’s group at the SCOREC center at RPI.

- Embedded UQ: Research into embedded UQ algorithms led by Eric Phipps often uses Albany as a demonstration platform.

- Performance Portable Kernels for new architectures: Albany is serving as a research vehicle for programming finite element assembly kernels using the Trilinos/Kokkos programming model and library.

E3SM is an unprecedented collaboration among seven National Laboratories, the National Center for Atmospheric Research, four academic institutions and one private-sector company to develop and apply the most complete leading-edge Earth system model to the most challenging and demanding subseasonal to decadal predictability imperatives. It is the only major national modeling project designed to address U.S. Department of Energy (DOE) mission needs and specifically targets DOE Leadership Computing Facility resources now and in the future, because DOE researchers do not have access to other major climate computing centers. A major motivation for the E3SM project is the coming paradigm shift in computing architectures and their related programming models as capability moves into the Exascale era. DOE, through its science programs and early adoption of new computing architectures, traditionally leads many scientific communities, including climate and Earth system simulation, through these disruptive changes in computing.

The FASTMath SciDAC Institute develops and deploys scalable mathematical algorithms and software tools for reliable simulation of complex physical phenomena and collaborates with application scientists to ensure the usefulness and applicability of FASTMath technologies.

FASTMath is a collaboration between Argonne National Laboratory, Lawrence Berkeley National Laboratory, Lawrence Livermore National Laboratory, Massachusetts Institute of Technology, Rensselaer Polytechnic Institute, Sandia National Laboratories, Southern Methodist University, and University of Southern California. Dr. Esmond Ng, LBNL, leads the FASTMath project.

HPC resource allocation consists of a pipeline of methods by which distributed-memory work is assigned to distributed-memory resources to complete that work. This pipeline spans both system and application level software. At the system level, it consists of scheduling and allocation. At the application level, broadly speaking, it consists of discretization (meshing), partitioning, and task mapping. Scheduling, given requests for resources and available resources, decides which request will be assigned resources next or when a request will be assigned resources. When a request is granted, allocation decides which specific resources will be assigned to that request. For the application, HPC resource allocation begins with the discretization and partitioning of the work into a distributed-memory model and ends with the task mapping that matches the allocated resources to the partitioned work. Additionally, network architecture and routing have a strong impact on HPC resource allocation. Each of these problems is solved independently and makes assumptions about how the other problems are solved. We have worked in all of these areas and have recently begun work to combine some of them, in particular allocation and task mapping. We have used analysis, simulation, and real system experiments in this work. Techniques specific to any particular application have not been considered in this work.
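
The stages named above can be sketched as a toy pipeline. This is a minimal illustration only: the function names, the first-come-first-served policy, the lowest-id-first node choice, and the block partitioner are all assumptions made for the example, not the methods studied in this work.

```python
from collections import deque

def schedule_fcfs(queue, free_nodes):
    """Scheduling: decide which request runs next (first come, first served)."""
    if queue and queue[0]["nodes"] <= len(free_nodes):
        return queue.popleft()
    return None  # head of the queue does not fit yet

def allocate(request, free_nodes):
    """Allocation: decide which specific nodes the request gets (lowest ids first)."""
    chosen = sorted(free_nodes)[: request["nodes"]]
    for node in chosen:
        free_nodes.remove(node)
    return chosen

def partition(num_cells, num_parts):
    """Partitioning: split cell ids into nearly equal contiguous blocks."""
    base, extra = divmod(num_cells, num_parts)
    parts, start = [], 0
    for p in range(num_parts):
        size = base + (1 if p < extra else 0)
        parts.append(list(range(start, start + size)))
        start += size
    return parts

def map_tasks(parts, nodes):
    """Task mapping: match each part of the work to one allocated node."""
    return {node: part for node, part in zip(nodes, parts)}

free_nodes = set(range(8))
queue = deque([
    {"job": "A", "nodes": 3, "cells": 10},
    {"job": "B", "nodes": 6, "cells": 12},
])

request = schedule_fcfs(queue, free_nodes)            # job A fits and is selected
nodes = allocate(request, free_nodes)                 # system-level decision
mapping = map_tasks(partition(request["cells"], len(nodes)), nodes)
blocked = schedule_fcfs(queue, free_nodes)            # job B must wait for free nodes
```

Each function here makes its decision without looking at the others, which mirrors the independence described above; combining allocation and task mapping would mean letting `allocate` see how the parts communicate.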

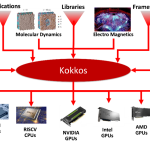

Modern high-performance computing (HPC) architectures have diverse and heterogeneous types of execution and memory resources. For applications and domain-specific libraries/languages to scale, port, and perform well on these architectures, their algorithms must be re-engineered for thread scalability and performance portability. The Kokkos programming system enables HPC applications and domain libraries to be implemented only once, while being performance portable across diverse architectures such as multicore CPUs, GPUs, and APUs.

This research, development, and deployment project advances the Kokkos programming system with new intra-node parallel algorithm abstractions, implements these abstractions in the Kokkos library, and supports applications’ and domain libraries’ effective use of Kokkos through consulting and tutorials. Kokkos is an open-source project in the Linux Foundation, with the primary development support coming from Sandia National Laboratories, Oak Ridge National Laboratory, and the French Alternative Energies and Atomic Energy Commission.

Achieving practical exascale supercomputing will require massive increases in energy efficiency. The bulk of this improvement will likely be derived from hardware advances such as improved semiconductor device technologies and tighter integration, hopefully resulting in more energy efficient computer architectures. Still, software will have an important role to play. With every generation of new hardware, more power measurement and control capabilities are exposed. Many of these features require software involvement to maximize feature benefits. This trend will allow algorithm designers to add power and energy efficiency to their optimization criteria. Similarly, at the system level, opportunities now exist for energy-aware scheduling to meet external utility constraints such as time of day cost charging and power ramp rate limitations. Finally, future architectures might not be able to operate all components at full capability for a range of reasons including temperature considerations or power delivery limitations. Software will need to make appropriate choices about how to allocate the available power budget given many, sometimes conflicting considerations. For these reasons, we have developed a portable API for power measurement and control.

IO libraries typically focus on writing the entire simulation domain for each output. For many computation classes, this is the correct choice. However, there are some cases where this approach is wasteful in time and space.

The Stitch library was developed initially for use with the SPPARKS kinetic Monte Carlo simulation to handle IO tasks for a welding simulation. This simulation type has a particular feature where there is computational intensity in a small part of the simulation domain with the rest being idle. Given this intensity, only writing the area that changes is far more space efficient than writing the entire simulation domain for each output. Further, the computation can be focused strictly on the area where the data will change rather than the whole domain. This can yield a reduction from 1024 to 16 processes and 1/64th the data written. These combined can lead to a reduction in the computation time with no loss in data quality. If anything, by reducing the amount written each time, more output is possible.

This approach is also applicable for finite element codes that share the same localized physics.

The code is in the final stages of copyright review and will be released on github.com. A work-in-progress paper was presented at PDSW-DISCS @ SC18; a full CS conference paper is planned for H1 2019, followed by a materials science journal paper.

Progressive data storage IO library. This library enables computing on a small part of the simulation domain at a time and then stitching together a coherent domain view based on a time epoch on request. Initial demonstration is for metal additive manufacturing. Paper at IPDPS 2020: DOI: 10.1109/IPDPS47924.2020.00016
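
The idea of writing only the active region and assembling a full view on request can be sketched as follows. This is a minimal pure-Python illustration, not the library's API: `write_block`, `stitch`, and the in-memory `store` list are hypothetical names, and the sketch assumes that where blocks overlap, the value from the latest epoch wins.

```python
def write_block(store, epoch, origin, block):
    """Record only the sub-block that changed at this epoch.

    origin is the (row, col) of the block's top-left corner in the full domain.
    """
    store.append((epoch, origin, [row[:] for row in block]))

def stitch(store, shape, epoch, fill=0):
    """Rebuild the full-domain view as of `epoch` from the partial writes."""
    rows, cols = shape
    domain = [[fill] * cols for _ in range(rows)]
    # Apply writes oldest first so newer values overwrite older ones.
    for when, (r0, c0), block in sorted(store, key=lambda w: w[0]):
        if when > epoch:
            break
        for dr, row in enumerate(block):
            for dc, value in enumerate(row):
                domain[r0 + dr][c0 + dc] = value
    return domain

store = []
write_block(store, epoch=1, origin=(0, 0), block=[[1, 1], [1, 1]])
write_block(store, epoch=2, origin=(1, 1), block=[[2, 2], [2, 2]])

view_at_1 = stitch(store, shape=(4, 4), epoch=1)
view_at_2 = stitch(store, shape=(4, 4), epoch=2)
```

Each write stores 4 cells rather than the 16 in the full domain, yet a reader can still request a complete 4x4 view at either epoch.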

The Structural Simulation Toolkit (SST) is a tool used to simulate large-scale and high-performance computing platforms. It allows users to design and test both the hardware (the physical parts of a computer) and software (the programs that run on the computer) together, seeing how they work with each other. This helps researchers understand and study new ideas in computer design. Design points such as instruction set architecture, memory systems, network interfaces, and the full system network can be studied together with programming models and algorithms.

SST has two differentiating features. First, it is designed to be modular, which enables mixing and matching a variety of simulation models. This allows users to change and test specific parts of the system more easily. Second, it runs simulations in parallel, using common techniques including message passing (MPI) and threading. This makes it very efficient for simulating large and complicated systems. SST has been successfully used to study various concepts, including advanced memory processing and traditional processors that connect through high-speed networks.
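
The modular, mix-and-match design can be illustrated with a small event-driven sketch. It is not SST code and does not use the SST API: the `Simulator`, `Cpu`, and `Memory` classes are hypothetical, the loop is sequential rather than parallel, and the memory model ignores contention.

```python
import heapq

class Simulator:
    """Minimal sequential event loop; components are registered by name."""
    def __init__(self):
        self.now = 0
        self.queue = []       # entries: (time, sequence, target name, payload)
        self.seq = 0          # tie-breaker so equal-time events keep their order
        self.components = {}

    def add(self, name, component):
        self.components[name] = component

    def send(self, target, payload, latency):
        heapq.heappush(self.queue, (self.now + latency, self.seq, target, payload))
        self.seq += 1

    def run(self):
        while self.queue:
            self.now, _, target, payload = heapq.heappop(self.queue)
            self.components[target].handle(self, payload)

class Cpu:
    def __init__(self, memory_name):
        self.memory_name = memory_name
        self.completed = []   # (address, completion time)

    def issue(self, sim, address):
        sim.send(self.memory_name, address, latency=1)   # one-cycle link to memory

    def handle(self, sim, payload):
        self.completed.append((payload, sim.now))

class Memory:
    def __init__(self, cpu_name, access_time):
        self.cpu_name = cpu_name
        self.access_time = access_time

    def handle(self, sim, payload):
        # Reply after the access time plus the one-cycle link back to the CPU.
        sim.send(self.cpu_name, payload, latency=self.access_time + 1)

def simulate(access_time):
    sim = Simulator()
    cpu = Cpu("mem")
    sim.add("cpu", cpu)
    sim.add("mem", Memory("cpu", access_time))
    cpu.issue(sim, 0x10)
    cpu.issue(sim, 0x20)
    sim.run()
    return cpu.completed

fast = simulate(access_time=10)
slow = simulate(access_time=100)
```

Only the memory component changes between the two runs; the CPU model and the event loop are untouched, which is the kind of substitution a modular simulator is meant to make easy.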

The Trilinos Project is an effort to develop algorithms and enabling technologies within an object-oriented software framework for the solution of large-scale, complex multi-physics engineering and scientific problems. A unique design feature of Trilinos is its focus on packages.

The Vanguard project is expanding the high-performance computing ecosystem by evaluating and accelerating the development of emerging technologies in order to increase their viability for future large-scale production platforms. The goal of the project is to reduce the risk in deploying unproven technologies by identifying gaps in the hardware and software ecosystem and making focused investments to address them. The approach is to make early investments that identify the essential capabilities needed to move technologies from small-scale testbed to large-scale production use.

The Zoltan project focuses on parallel algorithms for combinatorial scientific computing, including partitioning, load balancing, task placement, graph coloring, matrix ordering, distributed data directories, and unstructured communication plans.

The Zoltan toolkit is an open-source library of MPI-based distributed memory algorithms. It includes geometric and hypergraph partitioners, global graph coloring, distributed data directories using rendezvous algorithms, primitives to simplify data movement and unstructured communication, and interfaces to the ParMETIS, Scotch and PaToH partitioning libraries. It is written in C and can be used as a stand-alone library.
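
As an illustration of what a geometric partitioner does, the sketch below implements a serial recursive coordinate bisection on 2D points. It is a simplified stand-in written for this page, not Zoltan's implementation or interface: the `rcb` function is hypothetical, all points have equal weight, and it assumes there are at least as many points as parts.

```python
def rcb(points, num_parts):
    """Recursive coordinate bisection.

    points: list of (x, y) tuples. Returns a list of num_parts lists of points.
    """
    if num_parts == 1:
        return [points]
    # Cut along the dimension with the largest extent.
    extents = [max(p[d] for p in points) - min(p[d] for p in points) for d in range(2)]
    dim = extents.index(max(extents))
    ordered = sorted(points, key=lambda p: p[dim])
    # Split the points in proportion to the number of parts on each side.
    left_parts = num_parts // 2
    cut = len(ordered) * left_parts // num_parts
    return rcb(ordered[:cut], left_parts) + rcb(ordered[cut:], num_parts - left_parts)

points = [(x, y) for x in range(8) for y in range(2)]
parts = rcb(points, 4)
```

For this 8-by-2 strip of points the cuts all fall along x, giving four parts of four points each.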

The Zoltan2 toolkit is the next-generation toolkit for multicore architectures. It includes MPI+OpenMP algorithms for geometric partitioning, architecture-aware task placement, and local matrix ordering. It is written in templated C++ and is tightly integrated with the Trilinos toolkit.

CrossSim is a GPU-accelerated, Python-based crossbar simulator designed to model analog in-memory computing for any application that relies on matrix operations. This includes neural networks, signal processing, solving linear systems, and many more. It is an accuracy simulator and co-design tool that was developed to address how analog hardware effects in resistive crossbars impact the quality of the algorithm solution.

CrossSim has a NumPy-like API that allows different algorithms to be built on resistive memory array building blocks. CrossSim cores can be used as drop-in replacements for NumPy in application code to emulate deployment on analog hardware. CrossSim also provides both Torch- and Keras-compatible implementations of many analog-compatible neural network layers, including linear and convolutional layers.

CrossSim can model device and circuit non-idealities such as arbitrary programming errors, conductance drift, cycle-to-cycle read noise, and precision loss in analog-to-digital conversion (ADC). It also uses a fast, internal circuit simulator to model the effect of parasitic metal resistances on accuracy.

CrossSim also supports simulating systems with a wide range of data representation strategies including using multiple devices per value (i.e., weight bit slicing) and multiple approaches to representing negative numbers. Each of these components provides a modular interface to allow users to quickly implement models of new research ideas and explore the effects within the context of larger applications.
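
To make these effects concrete, the following toy model runs a matrix-vector product through a differential pair of conductances, with optional programming error, read noise, and ADC quantization. It does not use the CrossSim API. Every name here (`program`, `matvec`, `quantize`) is hypothetical, conductances are normalized to a maximum of 1, noise is Gaussian, read noise is applied per output rather than per device, and parasitic resistance is ignored.

```python
import random

def program(weights, prog_error=0.0, seed=0):
    """Map signed weights to (g_pos, g_neg) conductance pairs, with programming error."""
    rng = random.Random(seed)
    w_max = max(abs(w) for row in weights for w in row) or 1.0

    def device(target):
        # Conductances cannot be negative, so clip after adding the error.
        return max(0.0, target + rng.gauss(0.0, prog_error))

    pairs = [[(device(max(w, 0.0) / w_max), device(max(-w, 0.0) / w_max))
              for w in row] for row in weights]
    return pairs, w_max   # w_max converts normalized currents back to weight units

def quantize(value, full_scale, bits):
    """Symmetric signed ADC: clip to +/- full_scale, round to 2**bits - 1 levels."""
    step = full_scale / (2 ** (bits - 1) - 1)
    clipped = max(-full_scale, min(full_scale, value))
    return round(clipped / step) * step

def matvec(pairs, scale, x, adc_bits=8, read_noise=0.0, seed=1):
    """Sum differential currents per output, add read noise, then digitize."""
    rng = random.Random(seed)
    full_scale = sum(abs(xi) for xi in x) or 1.0   # largest ideal |current|
    result = []
    for row in pairs:
        current = sum((g_pos - g_neg) * xi for (g_pos, g_neg), xi in zip(row, x))
        current += rng.gauss(0.0, read_noise * full_scale)
        result.append(quantize(current, full_scale, adc_bits) * scale)
    return result

weights = [[0.5, -1.0], [2.0, 0.25]]
x = [1.0, 2.0]
exact = [sum(w * xi for w, xi in zip(row, x)) for row in weights]

pairs, scale = program(weights)
ideal = matvec(pairs, scale, x, adc_bits=16)   # near-ideal hardware
coarse = matvec(pairs, scale, x, adc_bits=4)   # visible precision loss in the ADC
```

Comparing `ideal` and `coarse` against `exact` shows how a single hardware parameter, here the ADC resolution, changes the quality of the result; setting `prog_error` or `read_noise` above zero adds the other error sources.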

Dakota: Optimization and Uncertainty Quantification Algorithms for Design Exploration and Simulation Credibility.

The Dakota toolkit provides a flexible, extensible interface between analysis codes and iterative systems analysis methods. Dakota contains algorithms for:

- optimization with gradient and nongradient-based methods;

- uncertainty quantification with sampling, reliability, stochastic expansion, and epistemic methods;

- parameter estimation with nonlinear least squares methods; and

- sensitivity/variance analysis with design of experiments and parameter study methods.

These capabilities may be used on their own or as components within advanced strategies such as hybrid optimization, surrogate-based optimization, mixed integer nonlinear programming, or optimization under uncertainty.
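
The pattern of an iterative method driving a black-box analysis code can be sketched in a few lines. This is an illustration of the concept only and does not use Dakota or its input format: `simulation` is a stand-in for an analysis code, and `parameter_study` and `sampling_uq` are hypothetical helpers that assume independent, normally distributed inputs.

```python
import random
import statistics

def simulation(x, y):
    """Stand-in analysis code: any black-box function of the parameters."""
    return (x - 1.0) ** 2 + 2.0 * y

def parameter_study(model, center, name, values):
    """Vary one parameter over `values`, holding the others at `center`."""
    return [(v, model(**{**center, name: v})) for v in values]

def sampling_uq(model, distributions, num_samples, seed=0):
    """Monte Carlo sampling: return the mean and standard deviation of the response."""
    rng = random.Random(seed)
    responses = []
    for _ in range(num_samples):
        sample = {name: rng.gauss(mu, sigma) for name, (mu, sigma) in distributions.items()}
        responses.append(model(**sample))
    return statistics.mean(responses), statistics.stdev(responses)

study = parameter_study(simulation, {"x": 0.0, "y": 0.0}, "x", [0.0, 1.0, 2.0])
mean, stdev = sampling_uq(simulation, {"x": (1.0, 0.1), "y": (0.0, 0.5)}, num_samples=2000)
```

Both helpers see the model only through its inputs and outputs, so the same analysis code can be reused unchanged under different methods.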

LAMMPS, an acronym for Large-scale Atomic/Molecular Massively Parallel Simulator, is a classical molecular dynamics code with a focus on materials modeling.

LAMMPS has potentials for solid-state materials (metals, semiconductors) and soft matter (biomolecules, polymers) and coarse-grained or mesoscopic systems. It can be used to model atoms or, more generically, as a parallel particle simulator at the atomic, meso, or continuum scale.

LAMMPS runs on single processors or in parallel using message-passing techniques and a spatial decomposition of the simulation domain. Many of its models have versions that provide accelerated performance on CPUs, GPUs, and Intel Xeon Phis. The code is designed to be easy to modify or extend with new functionality.
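
A spatial decomposition assigns each particle to the processor that owns the region of space containing it. The sketch below shows that assignment for a regular processor grid. It is a simplified illustration rather than LAMMPS code: `owner` and `decompose` are hypothetical names, the box is assumed to be orthogonal with its origin at zero, and the exchange of boundary ("ghost") atoms between neighbors is omitted.

```python
def owner(position, box, grid):
    """Return the (i, j, k) index of the processor whose subdomain contains position."""
    index = []
    for coord, length, procs in zip(position, box, grid):
        cell = int(coord / length * procs)
        index.append(min(cell, procs - 1))   # keep points on the upper face in range
    return tuple(index)

def decompose(positions, box, grid):
    """Group atom ids by owning processor."""
    owned = {}
    for atom_id, position in enumerate(positions):
        owned.setdefault(owner(position, box, grid), []).append(atom_id)
    return owned

box = (10.0, 10.0, 10.0)    # box edge lengths
grid = (2, 2, 1)            # processors along x, y, z
positions = [
    (1.0, 1.0, 5.0),
    (6.0, 1.0, 5.0),
    (1.0, 9.0, 0.5),
    (9.9, 9.9, 9.9),
    (2.0, 2.0, 2.0),
]
owned = decompose(positions, box, grid)
```

Because ownership depends only on position, each processor mostly interacts with its spatial neighbors, which keeps message passing local.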

Peridigm is an open-source computational peridynamics code. It is a massively-parallel simulation code for implicit and explicit multi-physics simulations centering on solid mechanics and material failure. Peridigm is a C++ code utilizing foundational software components from Sandia’s Trilinos project and is fully compatible with the Cubit mesh generator and Paraview visualization codes.

CIME is the Common Infrastructure for Modelling the Earth. It is the full-featured software engineering system for global Earth system or climate models. CIME is a set of Python scripts configured with XML data files, as well as Fortran source code, and owns the model configuration, build system, test harness, test suites, portability to many specific HPC platforms, input data set management, and results archiving. CIME is jointly developed by NCAR, Sandia, and Argonne, and is the software engineering system for several important climate/weather models: E3SM, CESM, and UFS.
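
The general pattern of Python scripts driven by XML data files can be sketched as below. This is not CIME code, and the file layout is an assumption: the `<config>` and `<entry>` elements, the setting names, and the `load_config` and `build_command` helpers are all hypothetical and chosen only to show the idea.

```python
import xml.etree.ElementTree as ET

# Hypothetical configuration data; element and attribute names are illustrative only.
CONFIG_XML = """
<config>
  <entry id="MACHINE" value="example-cluster"/>
  <entry id="COMPILER" value="gnu"/>
  <entry id="DEBUG" value="FALSE"/>
</config>
"""

def load_config(xml_text):
    """Return a dict mapping each entry id to its value string."""
    root = ET.fromstring(xml_text)
    return {entry.get("id"): entry.get("value") for entry in root.iter("entry")}

def build_command(config):
    """Assemble a hypothetical build command line from the configuration."""
    command = ["make", f"MACHINE={config['MACHINE']}", f"COMPILER={config['COMPILER']}"]
    if config.get("DEBUG") == "TRUE":
        command.append("DEBUG=1")
    return command

config = load_config(CONFIG_XML)
command = build_command(config)
```

Keeping platform and build settings in data files rather than in the scripts is what lets one set of scripts serve many machines and models.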