High Consequence, Automation, & Robotics (HCAR) specializes in developing advanced perception technologies and decision tools that enable robotic and unmanned systems to perform more complex and autonomous tasks, such as mapping a building while tracking their own position within it. Our perception capabilities include 3-D geometric modeling and texture mapping, 3-D video motion detection, Simultaneous Localization and Mapping (SLAM), and augmented reality training.

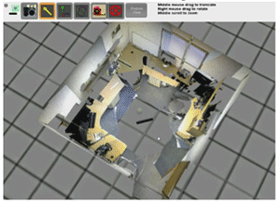

3-D World Model Building

Forensic analysis, crime scene investigation, emergency response, and building restoration all require a scene model. Sandia’s 3-D World Modeling provides a 3-D context for sensor measurements, length and volume calculations, operational planning, and coverage analysis.

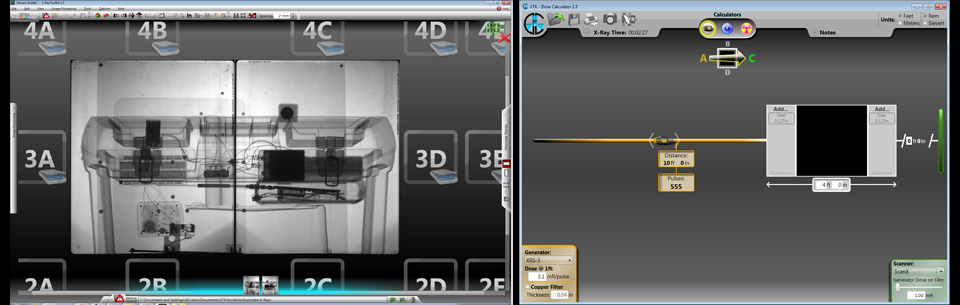

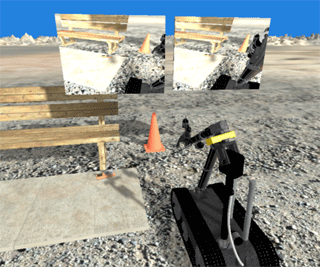

Visual Targeting

Visual Targeting uses triangulation data from a pair of video cameras pointed at a target to define points in space. The operator chooses an action, which invokes a motion planner that acts on the location data and drives the remote robot vehicle accordingly. The approach combines what the operator does well (analyzing complex video scenes, identifying features of interest, and determining courses of action) with what the camera and robot system does well (accurately measuring positions and offsets and planning compatible manipulator motion paths).
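To illustrate how two camera views can define a point in space, here is a minimal sketch of midpoint triangulation in Python. It is not HCAR's implementation, which this page does not describe. It assumes each camera has already been calibrated, so that the operator's selected pixel can be converted to a viewing ray (a camera center plus a direction) in a shared world frame. The function name `triangulate_midpoint` and its parameters are hypothetical.

```python
import math


def triangulate_midpoint(c1, d1, c2, d2, eps=1e-12):
    """Estimate a 3-D point from two viewing rays (illustrative sketch).

    c1, c2: camera centers as (x, y, z) tuples in a shared world frame.
    d1, d2: ray directions toward the target (need not be unit length).
    Returns (point, gap): the midpoint of the shortest segment joining
    the two lines, and the length of that segment. A large gap suggests
    the two rays do not really point at the same feature.
    Note: the rays are treated as infinite lines, so the result may lie
    behind a camera if the inputs are inconsistent.
    """
    def dot(u, v):
        return sum(a * b for a, b in zip(u, v))

    w = tuple(p - q for p, q in zip(c1, c2))
    a, b, c = dot(d1, d1), dot(d1, d2), dot(d2, d2)
    d, e = dot(d1, w), dot(d2, w)

    denom = a * c - b * b
    if abs(denom) < eps:
        raise ValueError("rays are parallel; no unique closest point")

    # Parameters of the closest points along each line.
    s = (b * e - c * d) / denom
    t = (a * e - b * d) / denom

    p1 = tuple(ci + s * di for ci, di in zip(c1, d1))
    p2 = tuple(ci + t * di for ci, di in zip(c2, d2))
    point = tuple((u + v) / 2 for u, v in zip(p1, p2))
    return point, math.dist(p1, p2)


if __name__ == "__main__":
    # Two cameras 1 m apart, both looking at a target at (0.5, 0, 2).
    point, gap = triangulate_midpoint(
        (0, 0, 0), (0.5, 0, 2),
        (1, 0, 0), (-0.5, 0, 2),
    )
    print(point, gap)  # (0.5, 0.0, 2.0) 0.0
```

The midpoint method is chosen here only because it is short and easy to follow. With real measurements the two rays rarely intersect exactly, so the returned gap gives a rough check on how well the two views agree before the location is handed to a motion planner.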

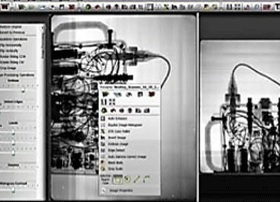

EOD Decision Support Tools

One of the most prominent applications of unmanned systems has been Explosive Ordnance Disposal (EOD), including Improvised Explosive Device (IED) defeat. Because of the limitations of currently available unmanned systems technology, EOD teams often have to assist the unmanned system with the mission, which puts them in harm’s way. HCAR specializes in developing advanced decision tools and software that enable these unmanned systems to perform the mission more effectively, removing people from harm’s way.